Research Design Types: Experimental vs Observational Methods (2026)

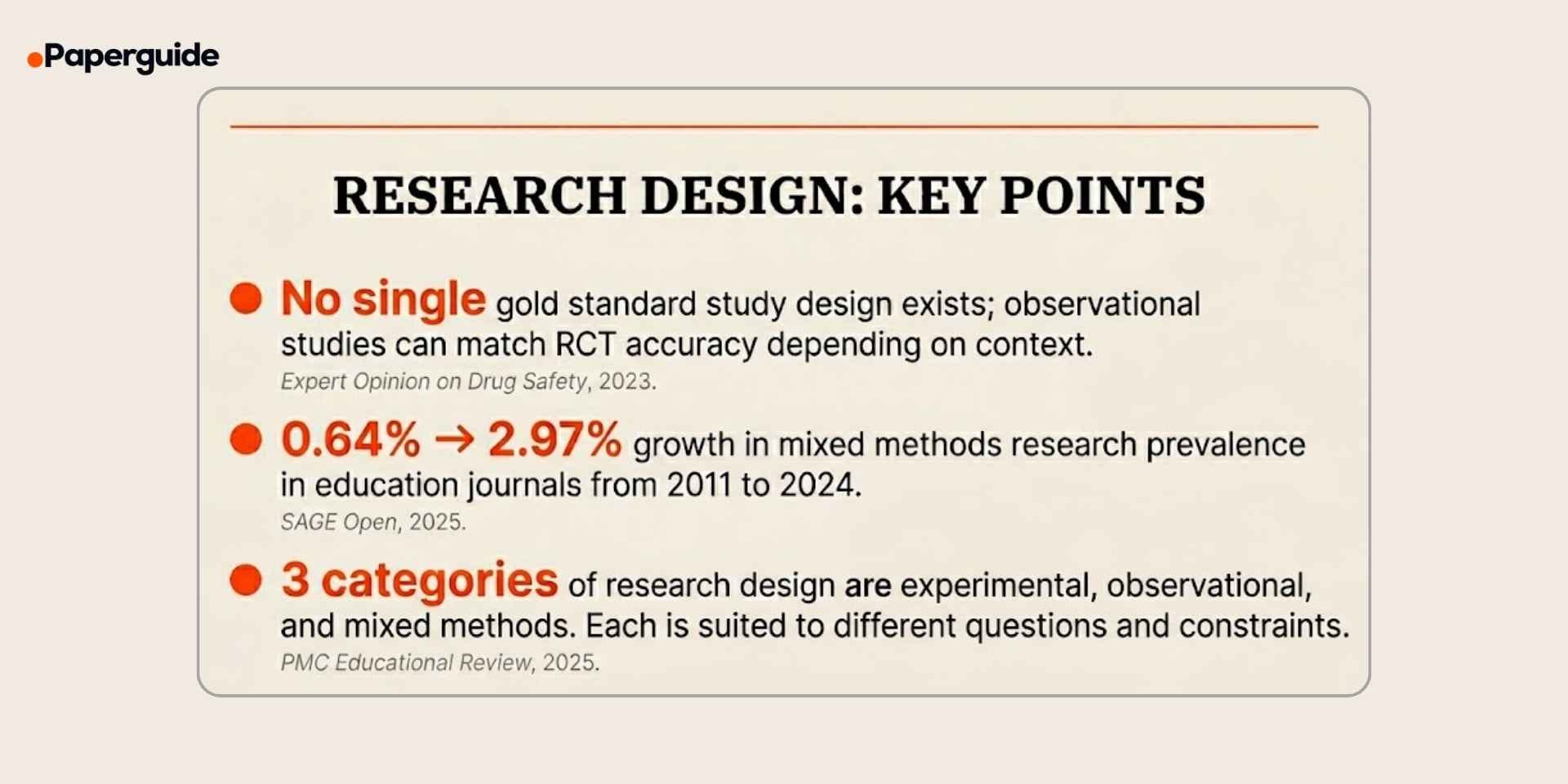

Research design is the blueprint that determines how a study collects, analyzes, and interprets data. Choosing the wrong design undermines every subsequent decision in the research process, from sampling to statistical analysis to the strength of conclusions. The stakes are high: a 2023 analysis in Expert Opinion on Drug Safety argued that there is no single gold standard study design, challenging decades of assumption that randomized controlled trials are always superior by demonstrating that observational studies can match or exceed RCT accuracy depending on the research context. [1]

At the same time, research design is becoming more methodologically diverse. A 2025 systematic review in SAGE Open found that mixed methods research in educational sub-disciplines grew from 0.64% of published articles in 2011 to 2.97% in 2024, reflecting a steady shift toward combining quantitative and qualitative approaches. [2] Meanwhile, a 2025 introduction to quasi-experimental design in PMC highlighted a resurgence of non-randomized approaches as ethical constraints and real-world complexity make fully randomized experiments infeasible for many research questions. [3]

This guide covers the three major categories of research design (experimental, observational, and mixed methods), explains their sub-types, compares their strengths and limitations, and provides a step-by-step process for selecting the right design for your study.

Key Takeaways

- Research design determines how data is collected, analyzed, and interpreted. Choosing the wrong one can undermine the entire study.

- There is no single gold standard design. The best choice depends on the research question, ethical constraints, and available resources. [1]

- Experimental designs establish causation through manipulation and control. Observational designs describe patterns without intervention. Mixed methods combine quantitative and qualitative approaches.

- Mixed methods research grew from 0.64% to 2.97% of published articles in education journals between 2011 and 2024. [2]

- Quasi-experimental designs are gaining traction as ethical and practical alternatives to full randomization. [3]

- Use the selection checklist and template in this guide to match your research question to the right design.

What Is Research Design?

Research design is the overall plan or framework that guides how a study will answer its research question. It specifies the type of data to collect, the methods for collecting it, and the procedures for analyzing it. A research design is not the same as a research method; methods are specific techniques (surveys, interviews, experiments), while design is the overarching strategy that determines which methods to use and how to combine them.

Every research design must address four fundamental questions:

- What type of data is needed (quantitative, qualitative, or both)?

- From whom will data be collected (population, sample, sampling method)?

- How will data be collected (instruments, procedures, timeline)?

- How will data be analyzed (statistical tests, thematic analysis, integration procedures)?

The choice of research design depends on the nature of the research question, the level of control the researcher has over variables, ethical considerations, and available resources. A causal question calls for an experimental design or, where randomization is not possible, a quasi-experimental one. A prevalence question is typically answered with a cross-sectional observational design. A complex question that benefits from both statistical evidence and lived experience may require a mixed methods design.

Experimental Research Design

Experimental research designs are characterized by the researcher actively manipulating one or more independent variables and measuring the effect on one or more dependent variables, while controlling for extraneous factors. The defining feature of experimental design is intervention: the researcher introduces a change and measures what happens.

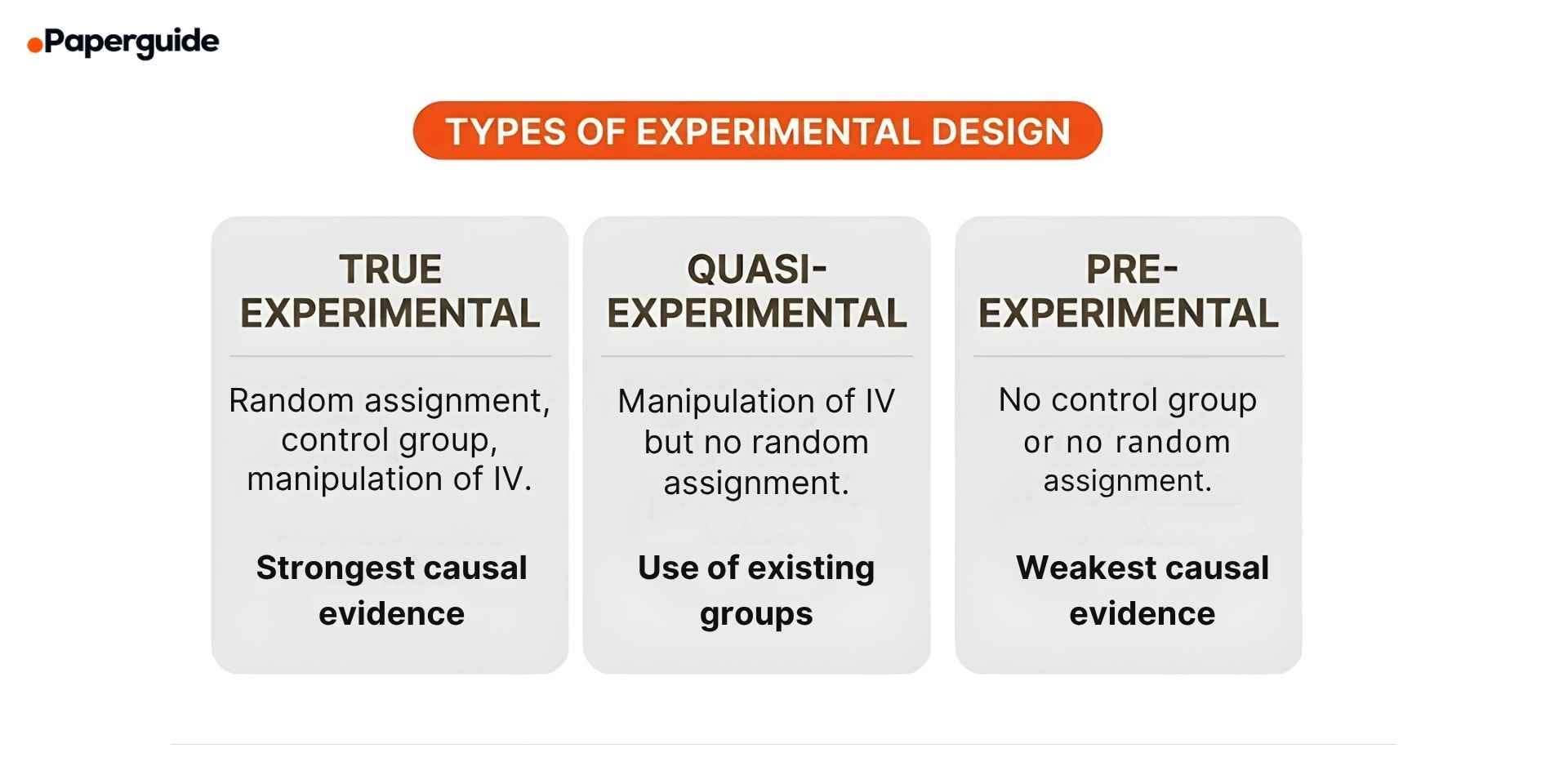

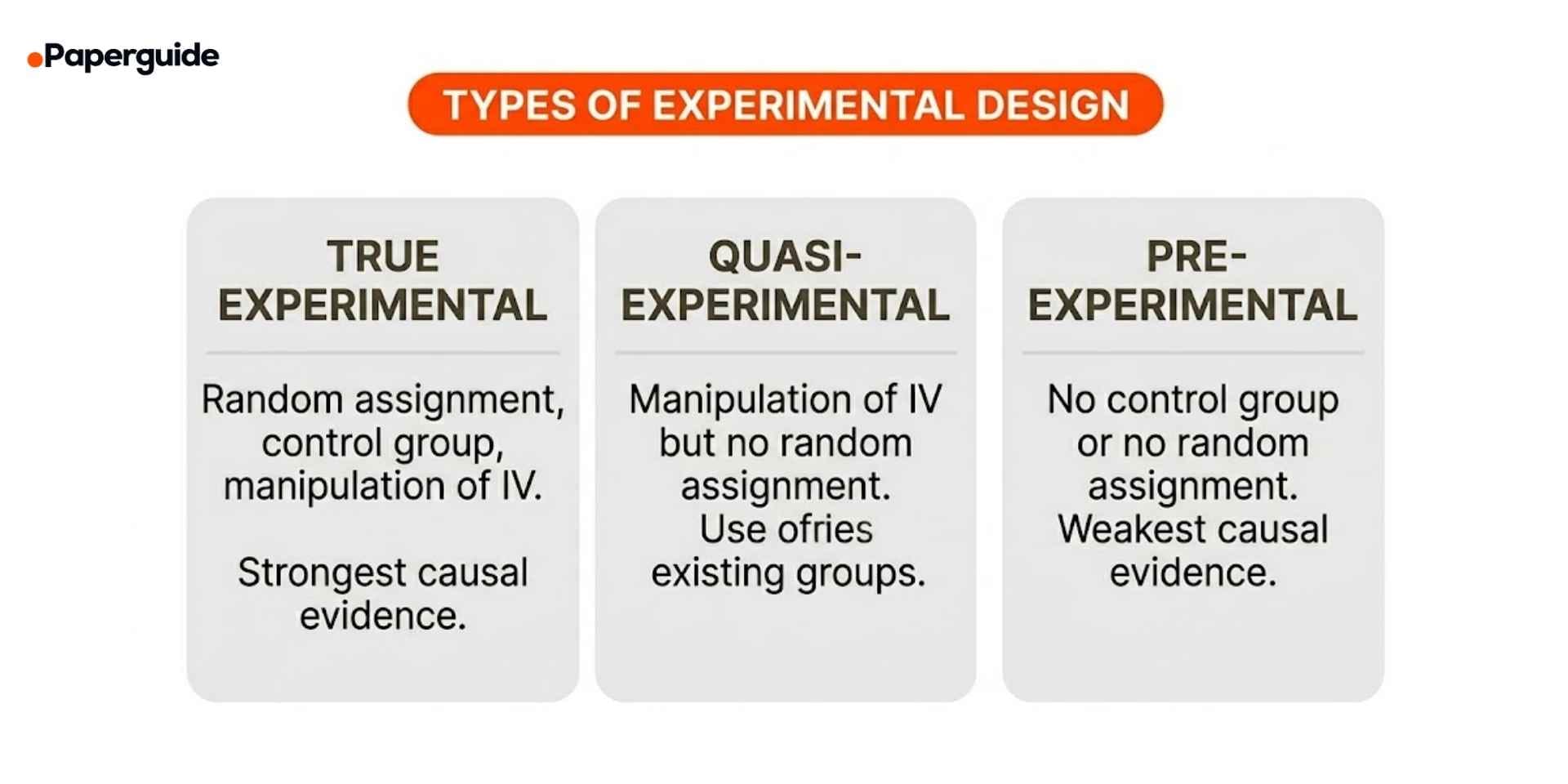

True Experimental Design

True experimental design is the strongest design for establishing causation. It requires three elements: manipulation of an independent variable, random assignment of participants to conditions, and a control group for comparison.

Example: A pharmaceutical company tests a new drug by randomly assigning 500 patients to either the treatment group (receives the drug) or the control group (receives a placebo). Neither patients nor administrators know which group receives which (double-blind). After 12 weeks, health outcomes are compared.
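The random-assignment step in this example can be sketched in a few lines. This is a minimal illustration, not a trial randomization protocol: the participant IDs, the fixed seed, and the function name `randomize` are assumptions made for the example, and real trials typically use concealed allocation, often with blocking or stratification.

```python
import random


def randomize(participant_ids, seed=None):
    """Randomly split participants into two arms of (near) equal size."""
    rng = random.Random(seed)         # seeded so the allocation can be reproduced
    shuffled = list(participant_ids)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"treatment": shuffled[:half], "control": shuffled[half:]}


# Hypothetical IDs standing in for the 500 patients in the example.
patients = [f"P{i:03d}" for i in range(1, 501)]
arms = randomize(patients, seed=2026)
print(len(arms["treatment"]), len(arms["control"]))
```

Because chance alone decides who lands in each arm, measured and unmeasured characteristics tend to balance across groups as the sample grows, which is what gives the design its internal validity.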

Strengths: Strongest internal validity. Random assignment minimizes confounding variables. Provides the clearest evidence for cause-and-effect relationships.

Limitations: Often impractical or unethical (e.g., you cannot randomly assign people to smoke to study lung cancer). Expensive and time-consuming. Artificial lab settings may reduce external validity.

Quasi-Experimental Design

Quasi-experimental design involves manipulation of an independent variable but lacks random assignment. Participants are assigned to groups based on pre-existing characteristics or practical constraints. This design is increasingly common as ethical review boards restrict random assignment in educational, clinical, and social research contexts. [3]

Example: A school district implements a new reading curriculum in five schools and uses five similar schools as a comparison group. Students were not randomly assigned to schools, but the outcomes are compared between the groups.

Strengths: More feasible and ethical than true experiments in many real-world settings. Can still provide useful evidence of causal relationships when combined with statistical controls.

Limitations: Weaker internal validity than true experiments because pre-existing group differences may confound results. Requires careful selection of comparison groups and statistical adjustments to address potential biases.

Pre-Experimental Design

Pre-experimental design is the simplest and weakest form of experimental design. It lacks a control group, random assignment, or both. It provides preliminary evidence but cannot confidently establish causation.

Example: A company measures employee productivity before and after implementing a wellness program. There is no control group, so any observed change could be due to the program, seasonal variation, or other factors.

Strengths: Simple, fast, and inexpensive. Useful for pilot testing or generating initial hypotheses.

Limitations: Cannot control for confounding variables. Extremely limited internal validity. Results should be interpreted with caution and followed up with stronger designs.

Observational Research Design

Observational research designs involve the researcher collecting data without manipulating any variables. The researcher observes, measures, and records what naturally occurs. These designs are essential when experimental manipulation is impossible, unethical, or impractical.

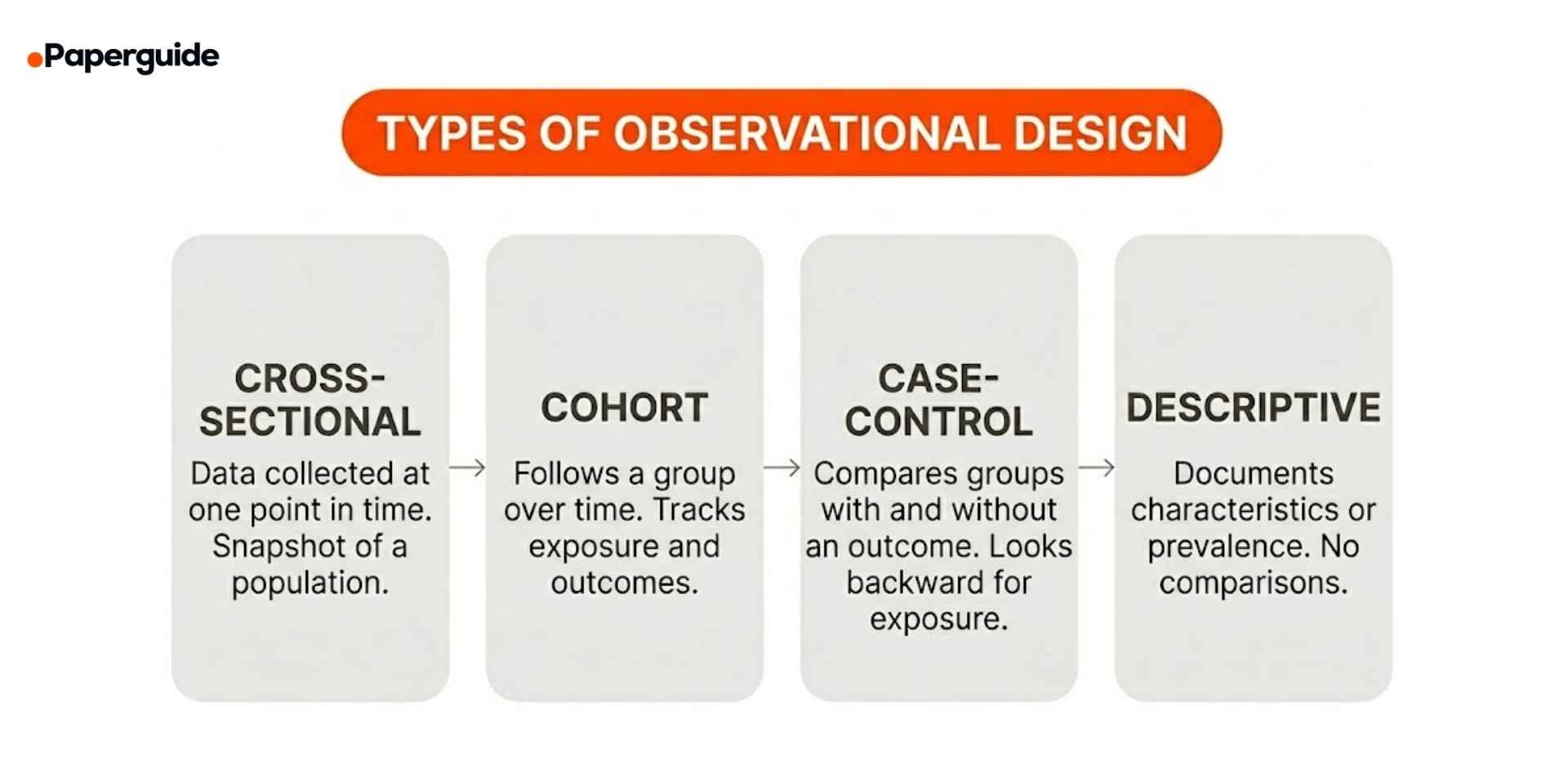

Cross-Sectional Design

Cross-sectional design collects data from a population at a single point in time. It provides a snapshot of the variables of interest and their relationships at that moment.

Example: A national survey measures the prevalence of burnout among nurses across 200 hospitals during March 2026. Data on burnout levels, work hours, and demographic variables are collected simultaneously.

Strengths: Fast, inexpensive, and straightforward. Good for estimating prevalence and identifying associations.

Limitations: Cannot establish causation or temporal sequence. A correlation between burnout and long work hours does not indicate which came first.
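A prevalence estimate from a cross-sectional survey is usually reported with a confidence interval. The sketch below computes a point prevalence and a Wilson score interval using only the Python standard library. The counts are hypothetical, the function name `prevalence_with_ci` is an assumption, and the calculation treats respondents as a simple random sample; a survey clustered by hospital would need a design-effect adjustment that is not shown here.

```python
import math
from statistics import NormalDist


def prevalence_with_ci(cases, n, confidence=0.95):
    """Return (prevalence, lower, upper) using the Wilson score interval."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    p = cases / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, centre - half_width, centre + half_width


# Hypothetical counts: 1,140 of 3,000 surveyed nurses meet the burnout threshold.
p, low, high = prevalence_with_ci(1140, 3000)
print(f"prevalence = {p:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

The interval describes sampling uncertainty only. It says nothing about whether long work hours preceded burnout, which is the limitation noted above.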

Cohort Design

Cohort design follows a group of individuals (a cohort) over time to observe how certain exposures or characteristics relate to outcomes. It can be prospective (following participants forward) or retrospective (analyzing existing records from the past).

Example: A prospective cohort study follows 10,000 adults who are currently healthy. At baseline, researchers document their exercise habits, diet, and smoking status. Over 20 years, the cohort is tracked for the development of cardiovascular disease, allowing researchers to compare disease rates between different exposure groups.

Strengths: Can establish temporal sequence (exposure before outcome). Useful for studying rare exposures or multiple outcomes from the same exposure.

Limitations: Expensive and time-consuming, especially prospective designs. Subject to attrition (participants dropping out over time). Confounding variables remain a concern.
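Because a cohort study follows people from exposure to outcome, it can estimate the risk in each exposure group directly. The following sketch compares two groups with a risk ratio and a risk difference. All counts are hypothetical and the function name `compare_risks` is an assumption; a real analysis would also adjust for confounders and for participants lost to follow-up.

```python
def compare_risks(exposed_cases, exposed_total, unexposed_cases, unexposed_total):
    """Return risk in each group, the risk ratio, and the risk difference."""
    risk_exposed = exposed_cases / exposed_total
    risk_unexposed = unexposed_cases / unexposed_total
    return {
        "risk_exposed": risk_exposed,
        "risk_unexposed": risk_unexposed,
        "risk_ratio": risk_exposed / risk_unexposed,
        "risk_difference": risk_exposed - risk_unexposed,
    }


# Hypothetical 20-year follow-up: 480 of 4,000 sedentary adults and
# 360 of 6,000 active adults develop cardiovascular disease.
result = compare_risks(480, 4000, 360, 6000)
print(result)
```

With these made-up numbers the sedentary group has twice the risk of the active group. The ratio describes an association observed over time, not proof that inactivity caused the difference.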

Case-Control Design

Case-control design starts with two groups — one with the outcome of interest (cases) and one without (controls) — and looks backward to identify differences in exposures or risk factors.

Example: A study of lung cancer identifies 200 patients with lung cancer (cases) and 200 similar patients without lung cancer (controls). Researchers compare their past smoking history, occupational exposures, and family medical history to identify risk factors.

Strengths: Efficient for studying rare diseases or outcomes. Faster and less expensive than cohort studies. Requires smaller sample sizes.

Limitations: Vulnerable to recall bias (participants may not accurately remember past exposures). Cannot directly calculate disease incidence or absolute risk. Selection of appropriate controls is challenging.
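Since incidence cannot be calculated when the researcher fixes the number of cases and controls, case-control studies usually report an odds ratio instead. The sketch below computes an odds ratio from a 2x2 table with a confidence interval based on the standard error of the log odds ratio. The counts are hypothetical and the function name `odds_ratio` is an assumption made for illustration.

```python
import math
from statistics import NormalDist


def odds_ratio(exposed_cases, unexposed_cases,
               exposed_controls, unexposed_controls, confidence=0.95):
    """Return (odds ratio, lower, upper) for a 2x2 case-control table."""
    or_estimate = (exposed_cases * unexposed_controls) / (
        unexposed_cases * exposed_controls
    )
    # Standard error of log(OR): square root of the summed reciprocal cell counts.
    se_log = math.sqrt(
        1 / exposed_cases + 1 / unexposed_cases
        + 1 / exposed_controls + 1 / unexposed_controls
    )
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    lower = math.exp(math.log(or_estimate) - z * se_log)
    upper = math.exp(math.log(or_estimate) + z * se_log)
    return or_estimate, lower, upper


# Hypothetical smoking history: 150 of 200 cases and 80 of 200 controls smoked.
or_estimate, lower, upper = odds_ratio(150, 50, 80, 120)
print(f"OR = {or_estimate:.2f}, 95% CI = ({lower:.2f}, {upper:.2f})")
```

This formula requires every cell count to be greater than zero; sparse tables need a different method.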

Descriptive Design

Descriptive design documents the characteristics, behaviors, or conditions of a population without comparing groups or testing hypotheses. It answers "what is" rather than "why" or "how."

Example: A university conducts a survey documenting the demographic composition, enrollment patterns, and declared majors of its entire student body for the current academic year.

Strengths: Provides foundational data for future research. Useful for identifying patterns, generating hypotheses, and informing policy.

Limitations: Cannot test relationships or establish causation. Limited analytical depth.

Observational designs are foundational to fields where experimentation is impossible, and researchers increasingly use AI tools for research to efficiently analyze the large datasets that observational studies typically generate.

Mixed Methods Research Design

Mixed methods research combines quantitative and qualitative data collection and analysis within a single study or program of research. The rationale is that neither quantitative nor qualitative methods alone can capture the full complexity of a research problem, and combining them provides a more complete understanding.

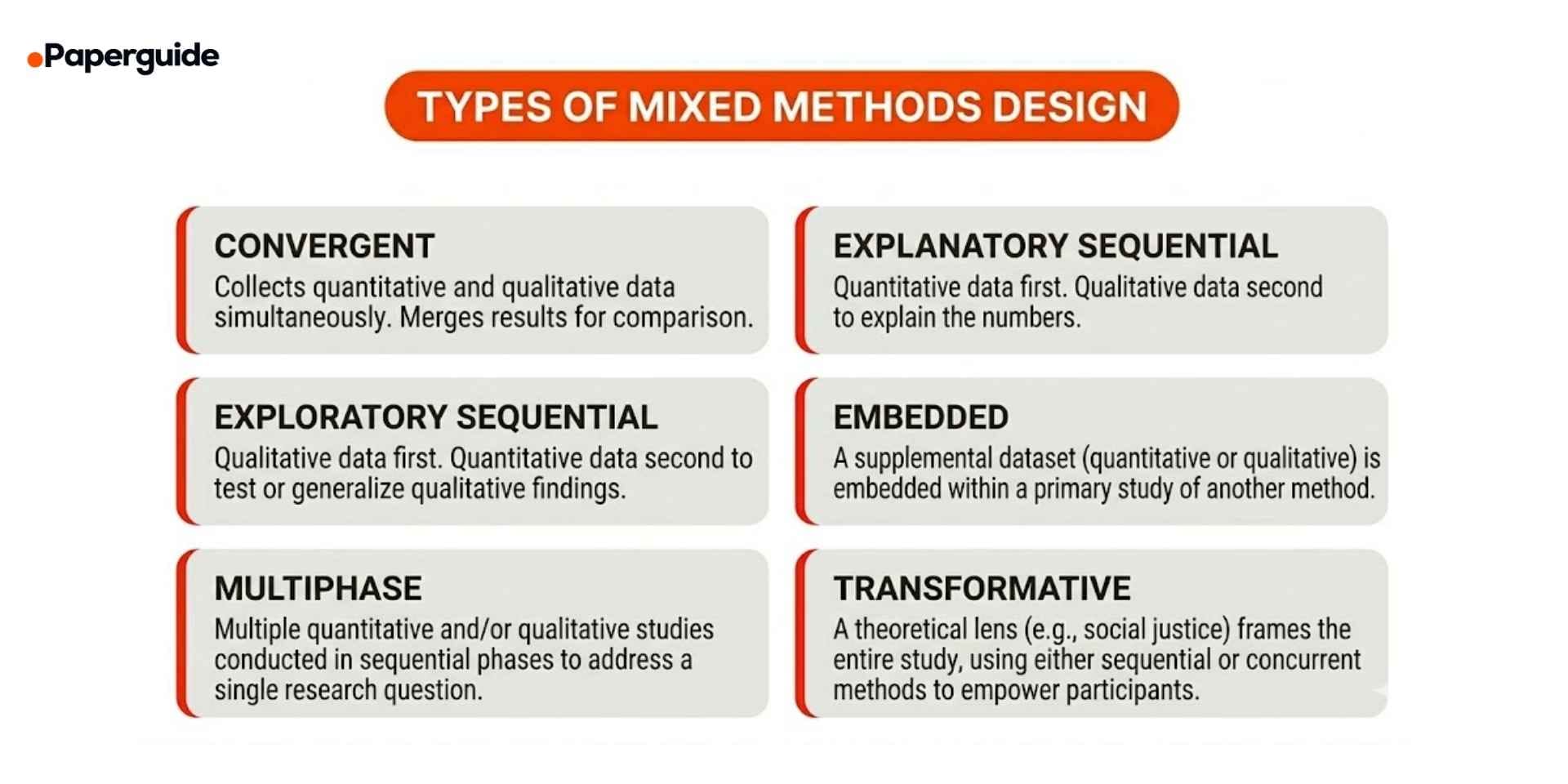

Convergent Design

Convergent design (also called parallel or concurrent design) collects quantitative and qualitative data simultaneously, analyzes them separately, and then merges the results to see whether they confirm, complement, or contradict each other.

Example: A study on patient satisfaction administers a standardized survey (quantitative) and conducts in-depth interviews (qualitative) with the same participants during the same period. Survey scores are analyzed statistically, interview transcripts are analyzed thematically, and the two sets of findings are compared.

Strengths: Provides a comprehensive view of the research problem. Allows for triangulation (verifying findings through multiple methods). Efficient because both data types are collected concurrently.

Limitations: Requires expertise in both quantitative and qualitative methods. Integrating the two datasets can be methodologically challenging, especially when results conflict.

Explanatory Sequential Design

Explanatory sequential design collects and analyzes quantitative data first, then uses qualitative data to explain, interpret, or contextualize the quantitative results.

Example: A survey of 1,000 teachers reveals that 38% report using AI tools in the classroom, but usage varies significantly by school district. To understand why, the researcher conducts follow-up interviews with 25 teachers from high-use and low-use districts.

Strengths: The qualitative phase directly addresses questions raised by the quantitative findings. Produces richer, more nuanced explanations than quantitative data alone. [4]

Limitations: Time-intensive (two sequential phases). Qualitative sample selection depends on quantitative results, requiring flexibility in the research plan.
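The dependence of the qualitative sample on the quantitative results can be made concrete. In this sketch, the survey results determine which districts supply interviewees by picking the lowest-use and highest-use groups. The district names, usage rates, and the function name `pick_extreme_groups` are all hypothetical.

```python
def pick_extreme_groups(usage_by_district, k=2):
    """Return the k lowest-use and k highest-use districts."""
    ranked = sorted(usage_by_district, key=usage_by_district.get)
    return {"low_use": ranked[:k], "high_use": ranked[-k:]}


# Hypothetical share of surveyed teachers reporting AI tool use, by district.
usage = {"North": 0.61, "South": 0.18, "East": 0.44, "West": 0.29, "Central": 0.52}
groups = pick_extreme_groups(usage)
print(groups)
```

Selecting extreme cases is only one possible sampling rule. The point is that the rule is written down before the quantitative phase ends, so the second phase follows from the first in a documented way.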

Exploratory Sequential Design

Exploratory sequential design begins with qualitative data collection and analysis, then uses the qualitative findings to develop or inform a quantitative instrument or phase.

Example: A researcher studying workplace incivility conducts focus groups with employees to identify the types and contexts of incivility they experience. The themes from these focus groups are used to develop a new survey instrument, which is then administered to 500 employees for quantitative validation.

Strengths: Ensures the quantitative phase is grounded in real participant experiences. Useful when existing measures do not exist for the construct of interest.

Limitations: Time-intensive (two sequential phases). The quality of the quantitative phase depends entirely on the rigor of the initial qualitative work.

Mixed methods research requires careful planning to integrate both data types meaningfully. Researchers exploring this approach can benefit from reviewing AI tools for meta-analysis that demonstrate how different types of evidence are synthesized across study designs.

Experimental vs Observational vs Mixed Methods: Key Differences

| Criteria | Experimental | Observational | Mixed Methods |
|---|---|---|---|
| Intervention | Researcher manipulates variables | No manipulation; natural observation | May include intervention or observation |
| Causation | Can establish cause and effect | Identifies associations only | Causal + contextual understanding |
| Data type | Primarily quantitative | Quantitative or qualitative | Both quantitative and qualitative |
| Control | High (randomization, control groups) | Low (no intervention) | Varies by design |
| Generalizability | May be limited by artificial settings | Higher ecological validity | Depends on integration quality |
| Cost and time | Often high | Varies widely | High (two data collection methods) |
| Best for | Testing causal hypotheses | Describing patterns and prevalence | Complex questions needing depth and breadth |

The three categories are not mutually exclusive. Many rigorous studies combine elements. For example, a quasi-experimental study with a qualitative follow-up component effectively becomes a mixed methods study. The key is that each design choice must be justified by the research question and documented transparently in the methodology.

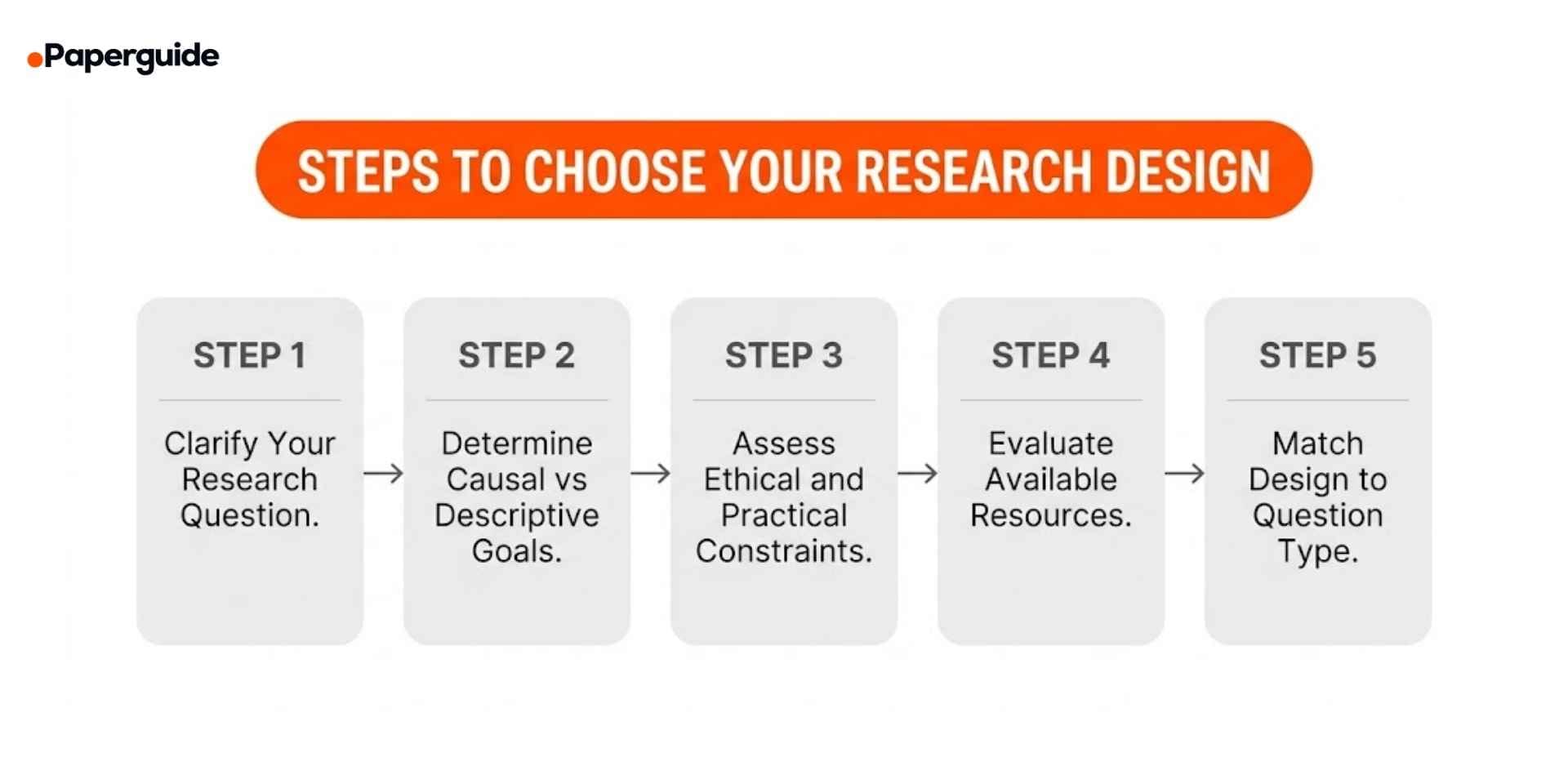

How to Choose the Right Research Design

Selecting the appropriate research design is one of the most consequential decisions in the research process. Follow this structured approach.

Step 1: Clarify Your Research Question

The structure of your research question determines the type of design you need.

- "Does X cause Y?" → Experimental design

- "Is X associated with Y?" → Observational design

- "How does X influence Y, and what do participants experience?" → Mixed methods design

If your question asks about causation, you need a design that can isolate variables. If it asks about associations or prevalence, observational designs are appropriate. If it asks about both statistical patterns and lived experience, mixed methods is indicated.

Step 2: Determine Whether You Need Causal Evidence

If your study aims to make causal claims ("this intervention causes this outcome"), you need an experimental or quasi-experimental design. If your study aims to describe patterns, relationships, or prevalence without causal claims, an observational design is sufficient.

This distinction is critical because overstating causal conclusions from observational data is one of the most common methodological errors in published research. [1]

Step 3: Assess Ethical and Practical Constraints

Some research questions cannot ethically be studied with experimental designs. You cannot randomly assign participants to harmful exposures (smoking, pollution, abuse). In these cases, observational designs are the only ethical option.

Similarly, practical constraints matter. If you cannot access a comparison group, a true experiment is not feasible. If you cannot follow participants over time, a cohort design is not viable.

Step 4: Evaluate Available Resources

Different designs have different resource requirements:

- True experiments require funding for recruitment, randomization procedures, control conditions, and often longer timelines.

- Cross-sectional surveys are relatively fast and inexpensive.

- Mixed methods studies require expertise in both quantitative and qualitative analysis, which may require multiple researchers or additional training.

Match your design to what your budget, timeline, and team can realistically support.

Step 5: Match the Design to Your Question Type

Use this decision framework:

- Causal question + ethical to manipulate → True experimental design

- Causal question + cannot randomize → Quasi-experimental design

- Association or prevalence question → Cross-sectional or cohort design

- Rare outcome + looking backward → Case-control design

- Statistical evidence + contextual understanding → Mixed methods design

- Exploring a new phenomenon → Qualitative or exploratory sequential mixed methods
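The decision framework above can be written as a small lookup function. This is a sketch for illustration only: the parameter names, the `question_type` labels, and the order in which the rules are checked are assumptions, and a real design decision involves judgment that a function like this cannot capture.

```python
def suggest_design(question_type, can_randomize=False,
                   rare_outcome=False, needs_context=False):
    """Map features of a research question to a candidate design.

    question_type: "causal", "association" (including prevalence), or "exploratory".
    """
    if question_type == "exploratory":
        return "Qualitative or exploratory sequential mixed methods"
    if needs_context:
        # Statistical evidence plus contextual understanding.
        return "Mixed methods design"
    if question_type == "causal":
        if can_randomize:
            return "True experimental design"
        return "Quasi-experimental design"
    if question_type == "association":
        if rare_outcome:
            return "Case-control design"
        return "Cross-sectional or cohort design"
    raise ValueError(f"Unknown question type: {question_type!r}")


print(suggest_design("causal", can_randomize=False))
print(suggest_design("association", rare_outcome=True))
```

Checking `needs_context` before the causal and association branches reflects one reading of the framework, in which any question that also asks about lived experience is treated as mixed methods.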

Examples Across Disciplines

Example 1: Medicine (True Experimental)

Research question: "Does a new antibiotic reduce infection rates compared to standard treatment after knee replacement surgery?"

- Design: True experimental (double-blind randomized controlled trial)

- Why: Causal question. Ethical to randomize treatment. Control group receives standard care.

- Key features: Random assignment, placebo control, blinding, pre-specified outcome measures

Example 2: Sociology (Cross-Sectional Observational)

Research question: "What is the relationship between social media use and self-reported loneliness among adults aged 18 to 30?"

- Design: Cross-sectional survey

- Why: Association question. Cannot ethically manipulate social media use. Data collected at one time point.

- Limitation acknowledged: Cannot determine whether social media causes loneliness or lonely people use more social media.

Example 3: Education (Quasi-Experimental)

Research question: "Does a new math tutoring program improve test scores among middle school students?"

- Design: Quasi-experimental (non-equivalent control group)

- Why: Schools cannot randomly assign students to classes. The program is implemented in some classrooms while others serve as comparison.

- Statistical control: Propensity score matching used to reduce pre-existing group differences.
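The matching step mentioned in this example can be sketched as follows. The code assumes propensity scores have already been estimated (for instance, with a logistic regression that is not shown) and performs greedy one-to-one nearest-neighbour matching within a caliper. The student IDs, scores, caliper value, and the function name `match_nearest` are hypothetical.

```python
def match_nearest(treated, controls, caliper=0.05):
    """Greedy 1:1 nearest-neighbour matching without replacement.

    treated, controls: dicts mapping unit ID to an estimated propensity score.
    Returns a list of (treated_id, control_id) pairs.
    """
    available = dict(controls)  # copy so matched controls can be removed
    pairs = []
    for t_id, t_score in sorted(treated.items(), key=lambda item: item[1]):
        if not available:
            break
        c_id = min(available, key=lambda cid: abs(available[cid] - t_score))
        if abs(available[c_id] - t_score) <= caliper:
            pairs.append((t_id, c_id))
            del available[c_id]
        # Treated units with no control inside the caliper stay unmatched.
    return pairs


# Hypothetical propensity scores for tutored (T) and comparison (C) students.
treated = {"T1": 0.62, "T2": 0.35, "T3": 0.90}
controls = {"C1": 0.33, "C2": 0.60, "C3": 0.58, "C4": 0.10}
pairs = match_nearest(treated, controls)
print(pairs)
```

Here `T3` stays unmatched because no comparison student has a similar score. Matching balances only the characteristics that went into the score, so unmeasured differences between groups can still bias the comparison.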

Example 4: Public Health (Explanatory Sequential Mixed Methods)

Research question: "What factors explain variation in COVID-19 vaccine uptake across rural communities?"

- Design: Explanatory sequential mixed methods

- Phase 1: Survey of 2,000 rural residents measuring uptake rates, demographic factors, and trust scores (quantitative).

- Phase 2: Interviews with 30 residents from communities with the highest and lowest uptake to understand barriers and facilitators (qualitative).

- Why: Statistical data alone cannot explain the cultural, social, and logistical factors that drive regional differences.

Example 5: Business (Cohort Observational)

Research question: "Does participation in a leadership development program predict promotion within three years?"

- Design: Prospective cohort study

- Why: Cannot ethically randomize who receives development opportunities. Instead, follows two cohorts (participants vs non-participants) over three years and compares promotion rates.

- Control for confounders: Job level, performance ratings, and tenure are included as covariates.

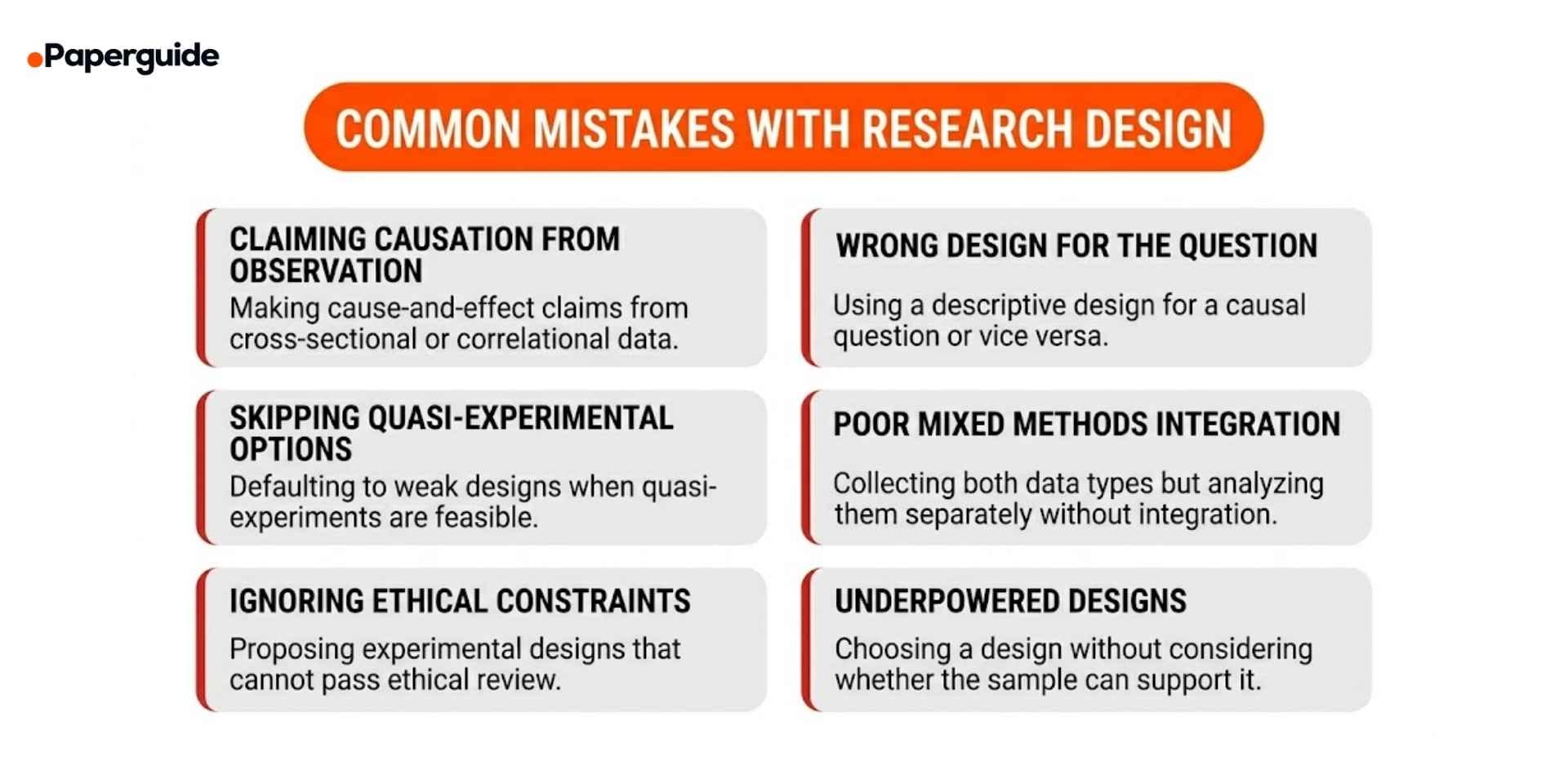

Common Mistakes and How to Fix Them

Research design errors are among the most consequential methodological problems because they affect every aspect of the study.

Mistake 1: Claiming Causation From Observational Data

Error: Using phrases like "social media causes depression" based on a cross-sectional survey that only measured correlation.

Fix: Match your language to your design. Observational studies can identify associations ("is associated with," "is correlated with") but not causation ("causes," "leads to"). Reserve causal language for designs that can support it: experiments with randomization and control or, with explicit caveats, well-controlled quasi-experiments.

Mistake 2: Choosing the Wrong Design for the Question

Error: Using a descriptive survey to answer a causal question, or using an expensive experimental design for a question that only requires descriptive data.

Fix: Start with your research question. If it asks "does X cause Y," you need an experimental or quasi-experimental design. If it asks "what is the prevalence of X," a cross-sectional design is sufficient.

Mistake 3: Overlooking Quasi-Experimental Options

Error: Abandoning causal inquiry entirely because a true experiment is not feasible, instead of considering quasi-experimental alternatives.

Fix: When random assignment is impossible, quasi-experimental designs with statistical controls (propensity score matching, difference-in-differences, instrumental variables) can provide credible causal evidence. These approaches are increasingly accepted in fields where full randomization is impractical. [3]
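Of the statistical controls listed, difference-in-differences is the simplest to show as arithmetic: subtract the comparison group's change from the treated group's change. The sketch below uses hypothetical test scores, and the function name is an assumption. The estimate is only credible if the two groups would have followed parallel trends without the intervention, which the code cannot check.

```python
from statistics import mean


def difference_in_differences(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the comparison group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical average test scores before and after a program.
did = difference_in_differences(
    treated_pre=[62, 65, 70, 63],
    treated_post=[72, 74, 80, 74],
    control_pre=[61, 66, 69, 64],
    control_post=[64, 70, 72, 70],
)
print(did)
```

In this made-up data the treated group improves by 10 points and the comparison group by 4, so the estimated program effect is 6 points rather than the naive before-and-after difference of 10.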

Mistake 4: Poor Integration in Mixed Methods

Error: Collecting both quantitative and qualitative data but analyzing them in complete isolation, with no point of integration.

Fix: Plan the integration point before data collection. In convergent designs, specify how datasets will be merged or compared. In sequential designs, specify how the first phase informs the second. Without integration, the study is two separate studies, not mixed methods. [2]

Mistake 5: Ignoring Ethical Constraints

Error: Proposing a research design that requires exposing participants to harm, denying treatment, or violating informed consent, leading to institutional review board (IRB) or ethics board rejection.

Fix: Evaluate ethical feasibility during the design phase, not after. If random assignment to a harmful condition is unethical, use an observational design or a quasi-experimental approach that compares naturally occurring groups.

Mistake 6: Underpowered Designs

Error: Selecting a complex design (e.g., factorial experiment, multi-phase mixed methods) without a sample size sufficient to support the analysis.

Fix: Conduct a power analysis before finalizing the design. If the required sample size is not attainable, simplify the design (e.g., reduce the number of experimental conditions or use a simpler mixed methods approach).
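A basic power analysis for comparing two group means can be sketched with the standard library. The function below uses the normal approximation n = 2 * ((z_alpha + z_beta) / d)^2 per group, where d is the standardized effect size. The function name and the default alpha and power values are assumptions for illustration. Dedicated power software based on the t distribution gives slightly larger numbers, and complex designs such as factorial experiments need their own calculations.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided, two-group comparison."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the target power
    return math.ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)


# Required participants per group for small, medium, and large effects.
for d in (0.2, 0.5, 0.8):
    print(d, n_per_group(d))
```

The steep rise in required sample size as the expected effect shrinks is why each additional experimental condition or subgroup comparison can make a design unattainable.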

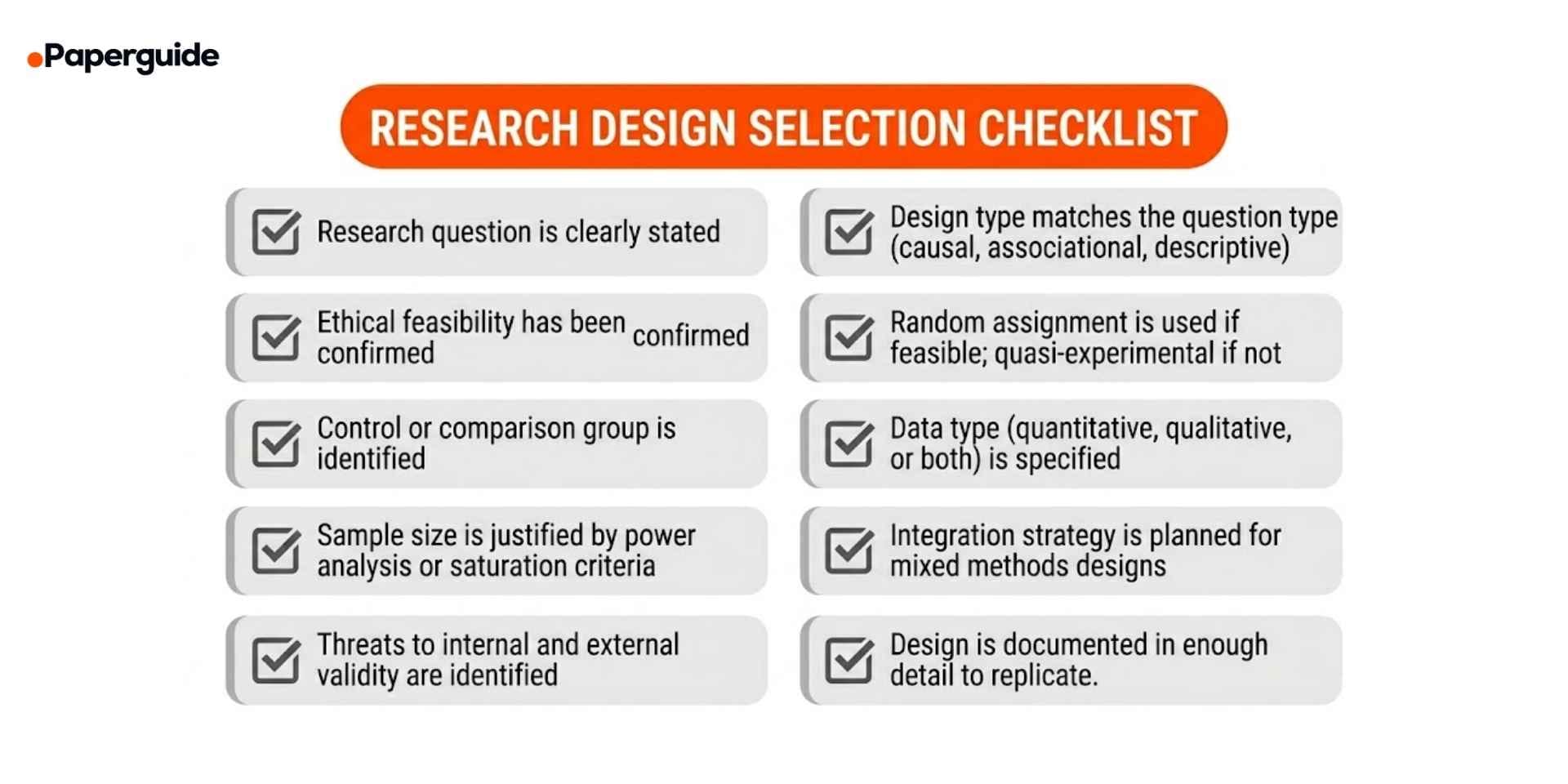

Research Design Selection Checklist

Use this checklist to verify your design choice before beginning data collection.

- [ ] Research question is clearly stated. The question specifies the variables, population, and type of answer sought.

- [ ] Design type matches the question type. Causal questions use experimental designs. Association questions use observational designs. Complex questions use mixed methods.

- [ ] Ethical feasibility has been confirmed. The design can pass IRB or ethics committee review.

- [ ] Random assignment is used if feasible. If randomization is not possible, quasi-experimental controls are in place.

- [ ] Control or comparison group is identified. The study includes a basis for comparison unless it is purely descriptive.

- [ ] Data type is specified. Quantitative, qualitative, or both are explicitly stated with corresponding collection methods.

- [ ] Sample size is justified. Power analysis (quantitative) or saturation criteria (qualitative) support the chosen sample size.

- [ ] Integration strategy is planned for mixed methods. The point and method of combining quantitative and qualitative data are specified.

- [ ] Threats to internal and external validity are identified. Potential confounders and generalizability limitations are documented.

- [ ] Design is documented in replicable detail. Another researcher could follow your description and conduct the same study.

Research Design Template

Use this template to document your research design. Replace the bracketed sections with your own content.

Research Question: [Your research question]

Design Type: [Experimental / Observational / Mixed Methods — specify subtype]

Justification: [Why this design is appropriate for your question]

Population and Sample: [Target population, sample size, sampling method]

Variables: [Independent, dependent, and control variables if applicable]

Data Collection: [Instruments, procedures, timeline]

Data Analysis: [Statistical tests, qualitative analysis methods, integration plan]

Ethical Considerations: [IRB approval status, informed consent, potential harms]

Known Limitations: [Design-specific threats to validity or generalizability]

Filled Example:

Research Question: "Does a structured peer mentoring program improve first-year college student retention and sense of belonging?"

Design Type: Explanatory sequential mixed methods with a quasi-experimental quantitative component

Justification: The question has both a causal element (does the program improve retention?) and a contextual element (how do participants experience belonging?). Random assignment is not possible because the program is offered campus-wide. Quasi-experimental comparison with non-participating students provides quantitative evidence, and interviews provide qualitative depth.

Population and Sample: First-year students at a public university (N = 1,200). Quantitative phase: 600 mentoring participants matched with 600 non-participants using propensity scores. Qualitative phase: 24 participants selected from high-engagement and low-engagement groups.

Variables: IV: Mentoring program participation. DV: Retention (enrolled in year 2, binary), Sense of belonging (measured by Sense of Belonging Scale, continuous). Controls: High school GPA, first-generation status, campus housing.

Data Collection: Phase 1: Sense of Belonging Scale administered at orientation and end of year 1. Retention data from registrar. Phase 2: Semi-structured interviews (45–60 minutes) with 24 participants after year 1.

Data Analysis: Phase 1: Propensity score matching, logistic regression (retention), paired t-test (belonging). Phase 2: Thematic analysis of interview transcripts. Integration: Qualitative themes mapped to quantitative findings (joint display table).

Ethical Considerations: IRB approved. Informed consent obtained. No deception. Interview data de-identified.

Known Limitations: Quasi-experimental design cannot fully eliminate self-selection bias. Qualitative findings are context-specific. Single-institution study limits generalizability.

Validate This With Papers (2 Minutes)

Before finalizing your research design, check how published studies with similar questions have structured their methodology. This prevents common design errors and strengthens your justification.

Step 1: Search for recent studies that address a similar research question. Focus on what design they chose and why they justified that choice in their methodology section.

Step 2: Open two or three relevant papers. Look at the research design, comparison groups, sample sizes, and how the authors addressed threats to validity. Reviewing tools for writing abstracts can help you quickly scan methodology descriptions across multiple papers by extracting their structured abstracts.

Step 3: Use an Essay Summarizer to extract the methodology section from each paper. Compare their design choices, justifications, and acknowledged limitations with yours.

This takes about two minutes and ensures your design is consistent with established practices in your field.

Conclusion

Research design is the decision that shapes every other decision in a study. Experimental designs provide the strongest evidence for causation through manipulation, control, and randomization. Observational designs describe what naturally occurs when intervention is impossible, unethical, or impractical. Mixed methods designs combine quantitative breadth with qualitative depth to address research questions that neither approach can answer alone. The choice between these three categories and the specific sub-types within each must be driven by the research question, not by convenience, tradition, or default preference.

The most consequential design mistakes are also the most avoidable. Claiming causation from observational data, choosing a design that does not match the research question, and collecting mixed methods data without an integration plan all result from insufficient planning during the design phase. Before committing to a design, run it through the selection checklist above, compare your approach with published studies in your field, and verify that your resources can support the design you have chosen. A well-justified research design does not guarantee significant findings, but it guarantees that whatever you find can withstand methodological scrutiny.

Frequently Asked Questions

What is research design?

Research design is the overall plan or framework that specifies how a study will collect, analyze, and interpret data to answer a research question. It determines the type of data collected, the methods used, the comparison groups involved, and the analytical procedures applied. It is the blueprint that connects the research question to the conclusions.

What is the difference between experimental and observational research?

Experimental research involves the researcher actively manipulating one or more variables and measuring the effect, allowing causal conclusions. Observational research involves the researcher collecting data without any manipulation, measuring what naturally occurs. Experimental designs can establish causation; observational designs can identify associations but not prove cause and effect.

When should I use mixed methods research?

Use mixed methods when your research question requires both statistical evidence (quantitative) and in-depth understanding of context, experiences, or processes (qualitative). Common situations include: evaluating an intervention while understanding participant experiences, developing a new measurement instrument, or explaining unexpected quantitative results.

What is the strongest research design for establishing causation?

A true experimental design with random assignment, a control group, and manipulation of the independent variable provides the strongest evidence for causation. When true experiments are not feasible, quasi-experimental designs with appropriate statistical controls are the next strongest option.

Can I change my research design after I start collecting data?

Changing the design mid-study is strongly discouraged because it introduces bias and threatens the validity of your conclusions. If you discover a design problem, document it transparently and address it as a limitation. For future studies, conduct a thorough design review before data collection begins.

What is the difference between cross-sectional and cohort designs?

A cross-sectional design collects data at a single point in time, providing a snapshot. A cohort design follows participants over time, tracking how exposures relate to outcomes. Cross-sectional designs are faster and cheaper but cannot establish temporal sequence. Cohort designs can show whether the exposure preceded the outcome.

How do I justify my research design choice in a paper?

In your methodology section, state the research question, explain why the chosen design is the most appropriate for that question, describe the alternatives you considered, and document why those alternatives were less suitable. Include references to published studies that used similar designs for comparable research questions.

Is qualitative research a research design?

Qualitative research is a broad approach to data collection and analysis, not a single research design. Within qualitative research, specific designs include case study, ethnography, phenomenology, grounded theory, and narrative analysis. Each has distinct procedures, sampling strategies, and analytical methods.

References

- Gerstman, B.B. "There is no single gold standard study design (RCTs are not the gold standard)." Expert Opinion on Drug Safety, 22(4), 2023.

- Gierus, B. et al. "Prevalence and Quality of Mixed Methods Research in Educational Subdisciplines: A Systematic Review." SAGE Open, 15(2), 2025.

- Capili, B. & Anastasi, J.K. "An Introduction to the Quasi-Experimental Design (Nonrandomized Design)." The American Journal of Nursing, 124(11), 2024.

- Fontana, G. et al. "The Multi-Stage Mixed Methods Framework: A new research design to combine hypothesis development and hypothesis testing and to embed machine learning and practitioner engagement in the social sciences." International Political Science Review, 47(1), 2026.

- Fernainy, P. et al. "Rethinking the pros and cons of randomized controlled trials and observational studies in the era of big data and advanced methods." BMC Proceedings, 18(1), 2024.