Research Instruments: Types, Examples and How to Choose

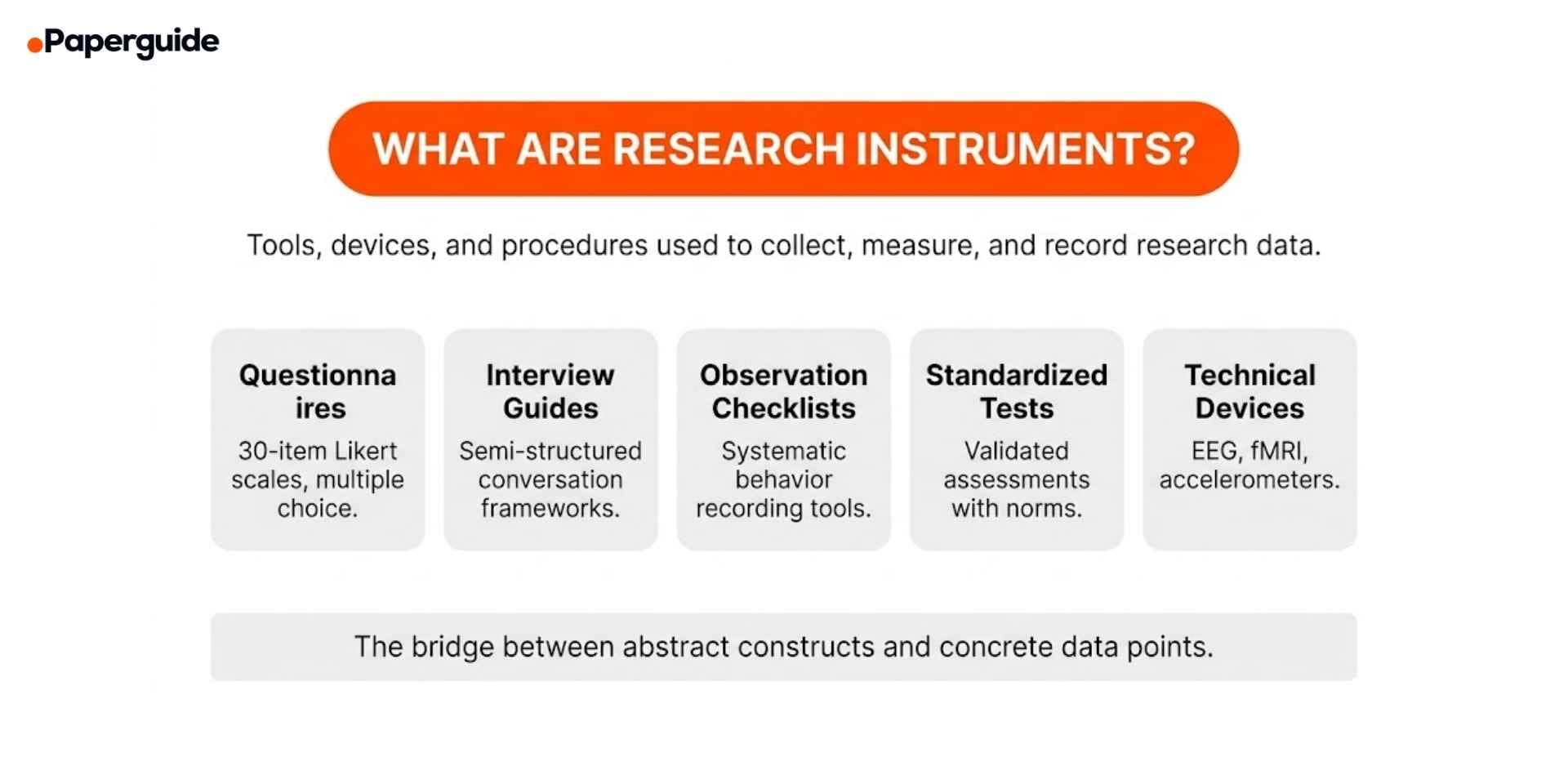

A research instrument is any tool used to collect, measure, or record data in a study. Surveys, questionnaires, interview guides, observation protocols, standardized tests, and physiological devices all fall under this category. The instrument you select determines the quality of your data, the validity of your findings, and the credibility of your conclusions.

Choosing the wrong instrument, or using a valid instrument incorrectly, is one of the most common sources of measurement error in research. A 2025 report on instrument development and psychometric evaluation found that a significant proportion of published studies fail to report essential validity and reliability evidence for the instruments they use, which makes it difficult for reviewers and readers to evaluate the trustworthiness of the findings. [1] A separate 2025 study on survey research methodology found that even well-designed instruments produce unreliable data when researchers skip pilot testing or fail to adapt validated tools to their specific study population. [2]

This guide explains the main types of research instruments, provides examples across disciplines, covers the development and validation process, and offers a framework for choosing the right instrument for your study.

Key Takeaways

- A research instrument is any tool used to collect or measure data. The type of instrument must match the type of data your research question requires.

- The most common instruments are questionnaires, interview guides, observation protocols, standardized tests, and physiological measurement devices.

- Validity (does the instrument measure what it claims to measure?) and reliability (does it produce consistent results?) are the two fundamental quality criteria for any instrument. [1]

- Researchers should use existing validated instruments whenever possible rather than creating new ones from scratch. Developing a new instrument requires a multi-phase process including content validation, pilot testing, and factor analysis. [3]

- Skipping pilot testing is one of the most common and most preventable errors in instrument selection. [2]

- The research question determines the instrument, not the other way around.

What Are Research Instruments?

A research instrument is a tool, device, or procedure that a researcher uses to collect data. Instruments serve as the bridge between the abstract concepts a study aims to measure (constructs) and the concrete data points that will be analyzed. In quantitative research, instruments produce numerical data through measurement. In qualitative research, instruments guide the collection of textual, visual, or observational data.

The term "research instrument" covers a wide range of tools. A 30-item Likert scale questionnaire is an instrument. A semi-structured interview guide with 10 open-ended questions is an instrument. A structured observation checklist used to record classroom behavior is an instrument. An fMRI scanner measuring brain activity is an instrument. What they share is a common purpose: systematic, replicable data collection.

The quality of any study depends on the quality of its instruments. A poorly designed questionnaire produces data that cannot be trusted, regardless of how large the sample or how sophisticated the statistical analysis. A 2024 methodological framework from GESIS argues that validity in survey research depends not only on instrument design but on the entire chain from construct definition to data interpretation, and that researchers frequently address validity too narrowly by focusing only on item wording while neglecting response processes and measurement context. [3]

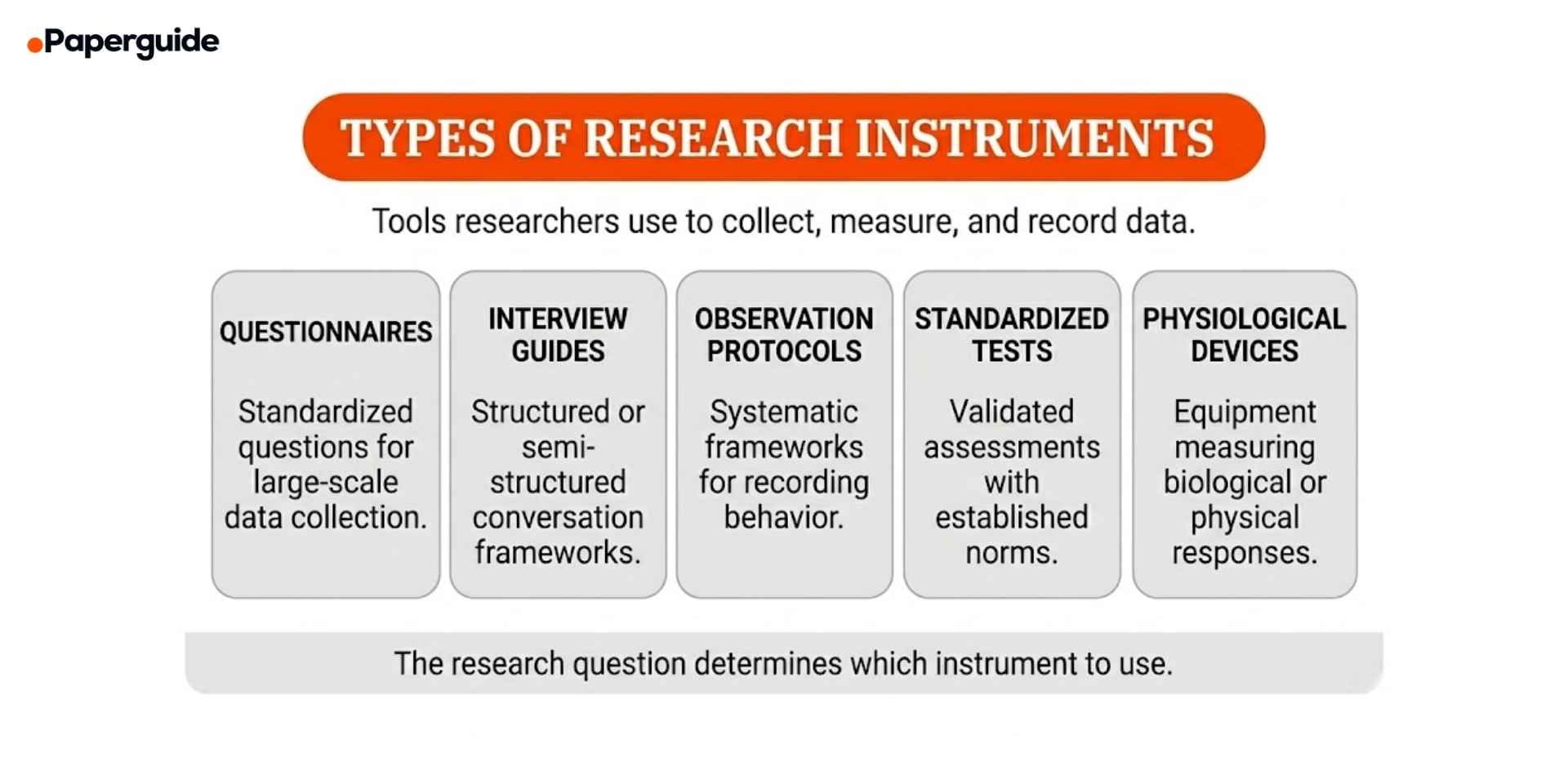

Types of Research Instruments

Questionnaires and Surveys

Questionnaires are the most widely used research instrument in social science, health, and education research. They consist of a structured set of questions designed to collect standardized responses from a sample. Items can be closed-ended (Likert scales, multiple choice, ranking) or open-ended (free text responses).

When to use: When you need to collect data from a large sample, when your constructs can be measured through self-report, and when standardized responses are needed for statistical analysis.

Example: The Patient Health Questionnaire-9 (PHQ-9) is a validated 9-item instrument measuring the severity of depression. Each item corresponds to one of the nine DSM-5 diagnostic criteria for major depressive disorder, scored on a 0 to 3 scale. The instrument has been validated across more than 200 studies and translated into over 80 languages, making it one of the most widely used mental health screening tools in the world.

Example: A researcher studying workplace burnout designs a 25-item questionnaire using the Maslach Burnout Inventory (MBI) framework. Items measure three dimensions: emotional exhaustion, depersonalization, and personal accomplishment. Each item uses a 7-point frequency scale ranging from "never" to "every day."

Interview Guides

Interview guides provide a structured or semi-structured framework for conducting research interviews. They outline the key questions, topics, and probes that the researcher will use during the conversation while allowing flexibility to follow up on unexpected responses.

When to use: When your research question asks about experiences, perceptions, decision-making processes, or meanings that cannot be captured through standardized questionnaires. Interview guides are essential when depth and context matter more than generalizability.

Example: A researcher studying how doctoral students navigate work-life balance prepares a semi-structured interview guide with 12 primary questions organized into three sections: daily routines and time management, support systems and relationships, and perceived barriers to balance. Each primary question includes two to three follow-up probes to encourage elaboration.

Example: A health researcher investigating patient experiences with telemedicine designs an interview guide with open-ended questions such as "Describe a recent telemedicine visit from start to finish" and "What aspects of the experience were different from an in-person visit?" Probes include "Can you tell me more about that?" and "How did that make you feel?"

Researchers developing interview guides often benefit from reviewing how published qualitative studies in their field structure their data collection. Tools that help generate and refine AI thesis statements can also help clarify the central focus of your interview guide by sharpening the core research question before drafting items.

Observation Protocols

Observation protocols are systematic frameworks for recording behavior, interactions, or events in natural or controlled settings. They specify what to observe, how to record it, and what categories or codes to use.

When to use: When you need to document actual behavior rather than self-reported behavior, when context and interaction patterns matter, or when participants cannot accurately describe their own actions.

Example: The Classroom Assessment Scoring System (CLASS) is a validated observation protocol used to assess teacher-student interactions across three domains: emotional support, classroom organization, and instructional support. Trained observers rate the dimensions within each domain on a 1 to 7 scale based on 15-minute observation cycles.

Example: A workplace researcher creates a structured observation protocol to study interruption patterns in open-plan offices. The protocol specifies: observation intervals (every 30 minutes), behavior categories (initiated conversation, received interruption, phone use, focused work), duration recording (start and end time of each event), and a coding scheme for interruption type (work-related, social, administrative).
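
A protocol like this is easier to apply consistently when every event is recorded in a fixed structure. The sketch below is one possible way to do that in Python, not a published tool: the names `ObservedEvent` and `minutes_by_behavior` are hypothetical, times are assumed to be minutes since the start of the session, and the categories are copied from the example above.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional

# Categories taken from the example protocol above.
BEHAVIORS = {"initiated conversation", "received interruption", "phone use", "focused work"}
INTERRUPTION_TYPES = {"work-related", "social", "administrative"}


@dataclass(frozen=True)
class ObservedEvent:
    """One coded event (hypothetical record layout)."""
    behavior: str
    start_min: float  # assumed unit: minutes since the session began
    end_min: float
    interruption_type: Optional[str] = None  # coded only for interruptions

    def __post_init__(self):
        # Reject codes that are not part of the protocol.
        if self.behavior not in BEHAVIORS:
            raise ValueError(f"unknown behavior code: {self.behavior}")
        if self.end_min < self.start_min:
            raise ValueError("event ends before it starts")
        if self.interruption_type is not None and self.interruption_type not in INTERRUPTION_TYPES:
            raise ValueError(f"unknown interruption type: {self.interruption_type}")

    @property
    def duration(self) -> float:
        return self.end_min - self.start_min


def minutes_by_behavior(events):
    """Total observed minutes for each behavior category."""
    totals = defaultdict(float)
    for event in events:
        totals[event.behavior] += event.duration
    return dict(totals)
```

Validating codes at entry time means a typo such as "focussed work" fails immediately instead of silently creating a new category during analysis.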

Standardized Tests

Standardized tests are instruments with established administration procedures, scoring rules, and normative data. They allow researchers to compare individual scores against population-level benchmarks.

When to use: When you need to measure cognitive abilities, academic achievement, personality traits, or clinical symptoms using instruments with established psychometric properties and population norms.

Example: The Wechsler Adult Intelligence Scale (WAIS-IV) measures cognitive ability across four index scores: verbal comprehension, perceptual reasoning, working memory, and processing speed. It has been normed on a representative sample of the population and is widely used in clinical and research settings.

Example: The Beck Depression Inventory-II (BDI-II) is a 21-item self-report instrument measuring the severity of depression symptoms. Each item is scored 0 to 3, with total scores categorized as minimal (0 to 13), mild (14 to 19), moderate (20 to 28), and severe (29 to 63). The instrument has established cut-off scores validated against clinical diagnoses.
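
The scoring rule described above can be sketched in a few lines. This is a minimal Python illustration, not an official scoring tool: `score_bdi_ii` and `BDI_II_BANDS` are hypothetical names, and the severity bands are the ones listed in the paragraph above.

```python
from typing import Sequence

# Severity bands as listed in the text: (lowest total, highest total, label).
BDI_II_BANDS = [
    (0, 13, "minimal"),
    (14, 19, "mild"),
    (20, 28, "moderate"),
    (29, 63, "severe"),
]


def score_bdi_ii(responses: Sequence[int]) -> tuple[int, str]:
    """Sum 21 item responses (each 0 to 3) and map the total to a band."""
    if len(responses) != 21:
        raise ValueError("expected 21 item responses")
    if any(r not in (0, 1, 2, 3) for r in responses):
        raise ValueError("each item must be scored 0, 1, 2, or 3")
    total = sum(responses)
    for low, high, label in BDI_II_BANDS:
        if low <= total <= high:
            return total, label
    raise AssertionError("unreachable: valid totals always fall in 0 to 63")
```

Rejecting incomplete or out-of-range responses, rather than scoring them anyway, keeps a data-entry error from being read as a low severity score.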

Physiological and Technical Devices

These instruments measure biological, physical, or technical variables using specialized equipment. They produce objective, quantifiable data that is not subject to self-report bias.

When to use: When your research question requires measurement of biological processes, physical responses, or environmental conditions that cannot be assessed through self-report or observation.

Example: A neuroscience researcher uses electroencephalography (EEG) to measure electrical brain activity while participants complete a decision-making task. The instrument records voltage fluctuations at 64 electrode sites across the scalp, producing continuous time-series data that is analyzed for event-related potentials.

Example: A sports science researcher uses accelerometers attached to participants' wrists and ankles to measure physical activity levels over a seven-day period. The devices record movement data continuously, producing objective measures of activity intensity, duration, and sedentary time that are more accurate than self-reported physical activity questionnaires.

Comparison of Research Instruments

| Instrument | Data Type | Best For | Sample Size | Key Strength |
|---|---|---|---|---|
| Questionnaire | Quantitative | Attitudes, behaviors, prevalence | Large (100+) | Standardization and scalability |
| Interview Guide | Qualitative | Experiences, meanings, processes | Small (12-30) | Depth and contextual richness |
| Observation Protocol | Quantitative and qualitative | Actual behavior, interactions | Varies | Captures real behavior |
| Standardized Test | Quantitative | Abilities, traits, clinical symptoms | Large | Normed scores and benchmarks |
| Physiological Device | Quantitative | Biological and physical processes | Small to medium | Objective; not subject to self-report bias |

Validity and Reliability: The Two Quality Standards

Every research instrument must demonstrate two fundamental properties: validity and reliability. Without both, the data an instrument produces cannot be trusted.

Validity

Validity asks: does this instrument actually measure what it claims to measure? A questionnaire designed to measure anxiety should measure anxiety, not general stress or depression.

Content validity evaluates whether the instrument covers all relevant aspects of the construct. Expert review panels typically assess content validity by rating each item for relevance and representativeness.

Construct validity evaluates whether the instrument measures the theoretical construct it is intended to measure. This is assessed through factor analysis, convergent validity (correlation with related measures), and discriminant validity (low correlation with unrelated measures).

Criterion validity evaluates whether the instrument's scores predict or correlate with an external criterion. Predictive validity concerns how well scores predict a future outcome, while concurrent validity concerns how well they correlate with an established instrument administered at the same time.

Reliability

Reliability asks: does this instrument produce consistent results? A reliable instrument yields similar scores when administered to the same participants under the same conditions.

Internal consistency measures whether items within the instrument are measuring the same construct. Cronbach's alpha is the most commonly reported metric, with values above 0.70 generally considered acceptable.
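
For readers who want to see what the coefficient computes, here is a minimal Python sketch of Cronbach's alpha using only the standard library. The function name `cronbach_alpha` and the row-per-respondent input layout are assumptions made for illustration; in practice most researchers rely on a statistics package.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    if len(rows) < 2:
        raise ValueError("need at least two respondents")
    k = len(rows[0])
    if k < 2 or any(len(row) != k for row in rows):
        raise ValueError("every respondent needs the same two or more items")
    # Sample variance of each item across respondents.
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    # Sample variance of each respondent's summed score.
    total_variance = variance([sum(row) for row in rows])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)
```

Alpha rises when items vary together, because the variance of the total score then exceeds the sum of the individual item variances.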

Test-retest reliability measures stability over time by administering the instrument to the same participants on two occasions and correlating the scores. High correlation indicates the instrument produces stable measurements.
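
The correlation step can be sketched as follows. The text does not name a specific coefficient, so Pearson's r is assumed here, and `pearson_r` is a hypothetical helper written for illustration. The two lists hold total scores for the same participants in the same order.

```python
from math import sqrt


def pearson_r(first, second):
    """Pearson correlation between two administrations of the same instrument."""
    if len(first) != len(second) or len(first) < 2:
        raise ValueError("need two equally long lists with at least two scores")
    mean_first = sum(first) / len(first)
    mean_second = sum(second) / len(second)
    # Sum of cross-products of deviations from each mean.
    cross = sum((a - mean_first) * (b - mean_second) for a, b in zip(first, second))
    spread_first = sqrt(sum((a - mean_first) ** 2 for a in first))
    spread_second = sqrt(sum((b - mean_second) ** 2 for b in second))
    if spread_first == 0 or spread_second == 0:
        raise ValueError("scores do not vary; correlation is undefined")
    return cross / (spread_first * spread_second)
```

Note that a correlation close to 1 shows that participants kept their rank order, not that their scores were identical: a uniform shift of every score between the two occasions leaves r unchanged.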

Inter-rater reliability measures agreement between different observers or raters using the same instrument. Cohen's kappa or intraclass correlation coefficients are standard metrics. A 2025 study on validity and reliability in qualitative research emphasized that inter-rater reliability is particularly critical for observation protocols and qualitative coding schemes, where subjective judgment plays a larger role than in standardized questionnaires. [4]
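
As an illustration of the first metric, the sketch below computes unweighted Cohen's kappa for two raters who assigned one nominal code to each observed event. The function name `cohen_kappa` and the list-based input are assumptions for this example; weighted kappa and intraclass correlations are not covered.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters coding the same events."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must code the same non-empty set of events")
    # Proportion of events on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's marginal code frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[code] * counts_b[code] for code in counts_a) / n ** 2
    if expected == 1:
        raise ValueError("both raters used a single identical code; kappa is undefined")
    return (observed - expected) / (1 - expected)
```

Kappa is lower than raw percent agreement because it discounts the agreement two raters would reach by chance given how often each uses every code.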

How to Develop a New Research Instrument

Developing a new research instrument should only be done when no existing validated instrument measures your construct. The process is resource-intensive and requires multiple validation phases. A 2025 study in BMC Medical Research Methodology outlined the standard multi-phase development process and emphasized that researchers frequently underestimate the time required, particularly for content validation and factor analysis stages. [5]

Phase 1: Define the Construct

Clearly define what the instrument will measure. Review existing literature to identify the theoretical dimensions of the construct. For example, if you are measuring "academic resilience," you need to identify its sub-dimensions (persistence, adaptive coping, help-seeking behavior) based on existing theory.

Phase 2: Draft Initial Items

Write items that cover all dimensions of the construct. Generate more items than you will need in the final version (typically two to three times as many). Each item should measure one concept only. Avoid double-barreled questions ("Do you feel stressed and anxious?"), leading questions, and jargon.

Phase 3: Expert Review

Submit the draft items to a panel of three to five experts in the field. Experts rate each item for clarity, relevance, and representativeness. Items that receive low ratings are revised or removed.

Phase 4: Content Validation

Calculate the Content Validity Index (CVI) based on expert ratings. Items with a CVI below 0.78 are typically revised or eliminated. The overall scale CVI should be above 0.80.
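
A minimal sketch of the calculation, under two assumptions that the text does not state: experts rate relevance on a 4-point scale, and a rating of 3 or 4 counts as "relevant" (a common convention). The scale-level value here is the average of the item-level values. `item_cvi` and `scale_cvi` are hypothetical names.

```python
def item_cvi(ratings, relevant_min=3):
    """Share of experts who rated the item as relevant (assumed: 3 or 4 of 4)."""
    if not ratings:
        raise ValueError("no expert ratings supplied")
    return sum(r >= relevant_min for r in ratings) / len(ratings)


def scale_cvi(ratings_by_item, item_cutoff=0.78):
    """Return item-level CVIs, their average, and the items below the cutoff."""
    if not ratings_by_item:
        raise ValueError("no items supplied")
    item_values = {item: item_cvi(r) for item, r in ratings_by_item.items()}
    average = sum(item_values.values()) / len(item_values)
    flagged = [item for item, value in item_values.items() if value < item_cutoff]
    return item_values, average, flagged
```

With a small panel the item-level index can only take a few values (with five experts: 0, 0.2, 0.4, 0.6, 0.8, or 1.0), so a single dissenting expert can move an item a long way.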

Phase 5: Pilot Test

Administer the instrument to a small sample (30 to 50 participants) representative of the target population. Analyze response patterns, completion time, and participant feedback. Identify items that are confusing, produce uniform responses, or do not correlate with the total scale score.
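
One of these checks, spotting items with near-uniform responses, is easy to automate. The sketch below is illustrative only: `flag_uniform_items` is a hypothetical helper, and the 90 percent threshold is an arbitrary assumption rather than an established standard.

```python
from collections import Counter


def flag_uniform_items(responses_by_item, max_modal_share=0.9):
    """Return items where one response option dominates the pilot sample.

    max_modal_share is an assumed threshold: an item is flagged when at least
    this share of respondents chose the same option.
    """
    flagged = {}
    for item, answers in responses_by_item.items():
        if not answers:
            continue  # nothing to evaluate for this item
        # Count of the most frequently chosen option.
        modal_count = Counter(answers).most_common(1)[0][1]
        share = modal_count / len(answers)
        if share >= max_modal_share:
            flagged[item] = share
    return flagged
```

A flagged item is not necessarily a bad item, but it carries little information for distinguishing between respondents and is worth discussing with pilot participants.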

Phase 6: Reliability Analysis

Administer the revised instrument to a larger sample (minimum 150 participants for exploratory factor analysis). Calculate Cronbach's alpha for the total scale and each subscale. Examine item-total correlations and remove items that fall below 0.30.
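
The item-total check can be sketched as below. This version uses the corrected item-total correlation (each item against the sum of the other items), which is a choice made for this example rather than something the text specifies. Function names are hypothetical, and the 0.30 cutoff is the one given above.

```python
from math import sqrt


def _pearson(x, y):
    """Pearson correlation; returns 0.0 when either list has no variance."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # undefined; treated as 0.0 so the item gets flagged
    return cross / (spread_x * spread_y)


def corrected_item_total(rows):
    """Correlate each item with the sum of the remaining items."""
    if len(rows) < 2:
        raise ValueError("need at least two respondents")
    k = len(rows[0])
    correlations = []
    for i in range(k):
        item = [row[i] for row in rows]
        rest = [sum(row) - row[i] for row in rows]  # total excluding item i
        correlations.append(_pearson(item, rest))
    return correlations


def items_to_drop(rows, threshold=0.30):
    """Zero-based indexes of items whose correlation falls below the threshold."""
    return [i for i, r in enumerate(corrected_item_total(rows)) if r < threshold]
```

Excluding the item from its own total avoids inflating the correlation, which matters most for short scales.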

Phase 7: Factor Analysis

Conduct exploratory factor analysis (EFA) to identify the dimensional structure of the instrument. If the factor structure matches your theoretical model, proceed to confirmatory factor analysis (CFA) with a separate sample (minimum 200 participants) to verify the structure. Report factor loadings, model fit indices, and variance explained.

Researchers reviewing published instruments in their field can use AI research title generators to explore how other studies frame their instrument development work, which helps when positioning your own validation study in the literature.

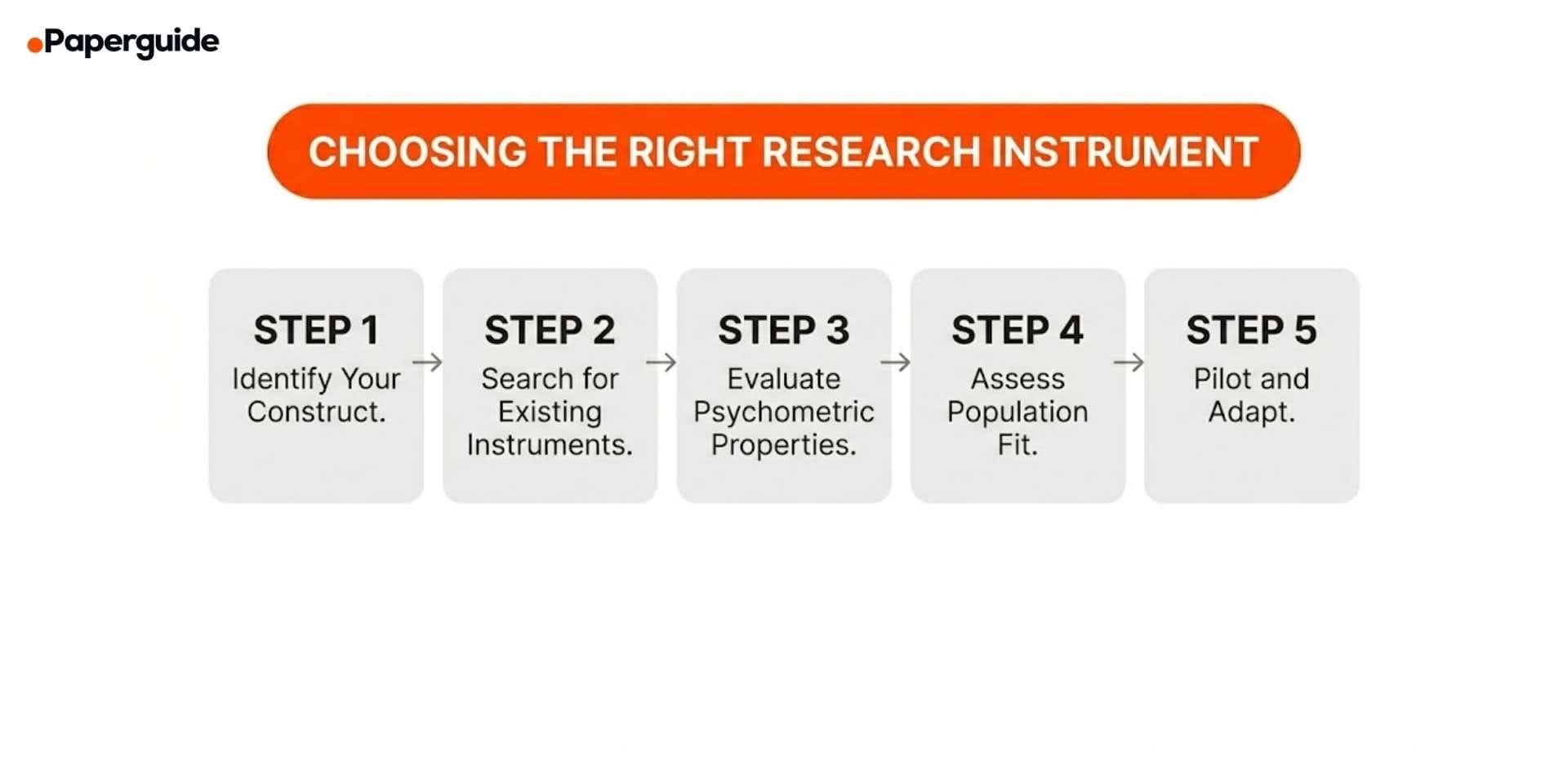

How to Choose the Right Research Instrument

Step 1: Identify Your Construct

Clearly define what you need to measure. Is it an attitude, a behavior, a skill, a clinical symptom, or a physiological process? The construct determines the type of instrument you need.

Step 2: Search for Existing Validated Instruments

Before developing a new instrument, search the literature for existing validated tools. Check psychometric databases, published validation studies, and systematic reviews of measurement instruments in your field. Using an existing validated instrument saves months of development time and produces data that is comparable across studies.

Step 3: Evaluate Psychometric Properties

For any instrument you consider, review the published evidence for validity (content, construct, criterion) and reliability (internal consistency, test-retest, inter-rater). Check whether the validation sample is similar to your target population. An instrument validated with college students in the United States may not be valid for elderly participants in rural India.

Step 4: Assess Population and Context Fit

Consider whether the instrument is appropriate for your specific population. Language, cultural context, literacy level, and administration format (paper vs. digital) all affect instrument performance. If you need to adapt an existing instrument for a different population, plan for translation, back-translation, and re-validation.

Step 5: Pilot and Adapt

Even when using an established instrument, always pilot it with a small sample from your target population before full data collection. Check for comprehension issues, unexpected response patterns, and administration problems. Make adjustments based on pilot results and document any changes.

Common Mistakes and How to Fix Them

Mistake 1: Creating a New Instrument When Validated Ones Exist

Error: Developing a custom questionnaire from scratch when published, validated instruments already measure the same construct.

Fix: Always search the literature first. Check databases like PsycTESTS, the Mental Measurements Yearbook, and systematic reviews of instruments in your field. Using an existing validated instrument is faster, produces comparable data, and carries stronger psychometric evidence than a newly created tool.

Mistake 2: Skipping Pilot Testing

Error: Distributing a questionnaire or using an interview guide in full data collection without testing it first on a small sample.

Fix: Pilot every instrument with 10 to 50 participants from your target population before full deployment. Check for confusing wording, unexpected response distributions, completion time issues, and technical problems with digital delivery.

Mistake 3: Ignoring Cultural and Contextual Fit

Error: Using an instrument developed and validated in one cultural context with a population from a completely different context without re-validation.

Fix: When adapting instruments across cultures or languages, follow established translation and adaptation protocols. Conduct forward translation, back-translation, expert review, and cognitive interviewing. Then re-validate the adapted instrument with a sample from the target population.

Mistake 4: Reporting Only Reliability Without Validity

Error: Reporting Cronbach's alpha as the sole evidence of instrument quality while neglecting construct validity, content validity, and criterion validity.

Fix: Report multiple forms of validity evidence alongside reliability. Include content validity index scores from expert review, factor analysis results and correlations with related measures for construct validity, and correlations with an external criterion or established instrument for criterion validity. Reliability without validity means an instrument consistently measures the wrong thing.

Mistake 5: Writing Double-Barreled Items

Error: Combining two concepts in a single item: "The training program was well-organized and engaging." A respondent who found it organized but not engaging cannot answer accurately.

Fix: Each item should measure one concept only. Split double-barreled items into separate items: "The training program was well-organized" and "The training program was engaging."

Mistake 6: Not Reporting Psychometric Evidence in Publications

Error: Using an instrument in a study but failing to report any validity or reliability evidence in the methods section.

Fix: Always report the psychometric properties of every instrument used in your study. Include the original validation reference, reliability coefficients from your own sample, and any adaptations you made. This allows readers and reviewers to evaluate the trustworthiness of your data.

Research Instrument Selection Checklist

- [ ] Construct is clearly defined. The variable being measured is specified with its theoretical dimensions.

- [ ] Literature search for existing instruments is completed. Validated tools have been identified and reviewed before considering new development.

- [ ] Psychometric evidence is evaluated. Validity (content, construct, criterion) and reliability (internal consistency, test-retest) are reviewed for each candidate instrument.

- [ ] Population fit is assessed. The instrument has been validated with a population similar to your target sample, or adaptation is planned.

- [ ] Pilot testing is completed. The instrument has been tested with a small sample and revised based on feedback.

- [ ] Administration procedures are standardized. Clear instructions, consistent delivery format, and time requirements are documented.

- [ ] Scoring procedures are specified. Scoring rules, cut-off points, and interpretation guidelines are established before data collection.

- [ ] Ethical considerations are addressed. Sensitive items are reviewed, informed consent covers all measurement procedures, and data storage is secure.

- [ ] Psychometric reporting is planned. Validity and reliability evidence will be reported in the methods section of the final publication.

- [ ] Instrument permissions are obtained. Copyright holders have granted permission for use, and any licensing fees are accounted for.

Researchers comparing instruments across studies benefit from using AI essay writing tools to organize and synthesize findings from multiple validation studies into a coherent instrument selection rationale for their methodology section.

Validate This With Papers (2 Minutes)

Before finalizing your instrument choice, check how published studies in your field have used the same or similar instruments. This confirms that your selection is consistent with disciplinary standards.

Step 1: Search for recent studies that measured the same construct you are investigating. Note which instruments they used, how they justified that choice, and what psychometric evidence they reported.

Step 2: Open two or three relevant validation studies. Look at the methodology section for sample characteristics, validity evidence, and reliability coefficients. Reviewing published instrument comparisons with AI research title generators can help you quickly locate validation studies by searching for instrument names alongside your target construct.

Step 3: Use a Plagiarism Checker to compare any adapted instrument items against the original wording, so you can see how far each item has moved from the validated text. Items that have been heavily reworded may no longer measure the validated construct. This is especially important when modifying existing instruments for a new population.

This takes about two minutes and ensures your instrument choice is well-supported by existing evidence.

Conclusion

Research instruments are the foundation of data quality. A study is only as strong as the tools used to collect its data. Questionnaires measure attitudes and behaviors at scale. Interview guides capture the depth and context that numbers cannot. Observation protocols document what people actually do. Standardized tests provide normed benchmarks. Physiological devices produce objective biological measurements.

The most important principle is to use existing validated instruments whenever possible. Developing a new instrument requires months of work across multiple validation phases, and even then, it will have less psychometric evidence than established tools. When you must create a new instrument, follow the full development process from construct definition through factor analysis. When you use an existing instrument, evaluate its psychometric properties, assess its fit for your population, and pilot it before full deployment. Strong instruments produce strong data, and strong data produces credible research.

Frequently Asked Questions

What is a research instrument?

A research instrument is any tool used to collect, measure, or record data in a study. This includes questionnaires, interview guides, observation protocols, standardized tests, and physiological measurement devices. The instrument provides a systematic, replicable method for gathering the information needed to answer the research question.

What is the difference between validity and reliability in research instruments?

Validity refers to whether an instrument measures what it claims to measure. Reliability refers to whether it produces consistent results across repeated administrations. An instrument can be reliable without being valid (consistently measuring the wrong thing), but it cannot be valid without being reliable. Both properties are required for trustworthy data.

Should I create my own research instrument or use an existing one?

Use an existing validated instrument whenever possible. Existing instruments have established psychometric evidence, allow comparison across studies, and save significant development time. Only develop a new instrument when no existing tool measures your specific construct in your target population.

How do I know if a research instrument is valid?

Check the published validation studies for the instrument. Look for evidence of content validity (expert review), construct validity (factor analysis, convergent and discriminant validity), and criterion validity (correlation with external criteria). Also check whether the validation sample is similar to your target population.

What is pilot testing and why is it important?

Pilot testing involves administering your instrument to a small sample (10 to 50 participants) from your target population before full data collection. It reveals problems with item wording, response patterns, completion time, and administration procedures that cannot be detected through expert review alone. Skipping pilot testing is one of the most common causes of unusable data.

How many participants do I need to validate a new instrument?

For pilot testing a new instrument, 30 to 50 participants are typically sufficient. For exploratory factor analysis, a minimum of 150 participants is recommended. For confirmatory factor analysis, at least 200 participants are needed, drawn from a separate sample. These figures are rules of thumb: the sample actually required depends on the number of items and the expected factor structure of the instrument.

References

- [1] Souza, A. C., et al. (2025). Key points in writing instrument development and psychometric evaluation reports. BMC Medical Research Methodology.

- [2] Aamodt, A. H., et al. (2025). Survey research in genetic counseling. Journal of Genetic Counseling.

- [3] Repke, L., Birkenmaier, J., & Lechner, C. (2024). Validity in survey research: From research design to measurement. GESIS Survey Guidelines.

- [4] Ochieng, P. (2025). Ensuring validity and reliability in qualitative research. BMC Medical Research Methodology.

- [5] Khamis, R., et al. (2025). Development, validation, and usage of metrics to evaluate the quality of clinical research hypotheses. BMC Medical Research Methodology.